What is photometric redshift?

Photometry measures a galaxy's light integrated over a few broad wavelength bands (filters), summarizing its full spectrum as one magnitude per band. When a spectrum is redshifted, its features move across these bands and the band magnitudes change. Photometry can therefore be used to estimate a galaxy's redshift, which serves as a proxy for its distance. This estimate is called a photometric redshift.
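A minimal sketch of why band magnitudes carry redshift information. It assumes a hypothetical toy spectrum with a single step at 4000 Å (loosely mimicking the 4000 Å break) and idealized top-hat filters, not real survey bandpasses. As $z$ grows, the step moves into redder bands and their magnitudes change.

```python
import numpy as np

# Observed-frame wavelength grid in Angstroms (1 A spacing).
wave = np.linspace(3000.0, 11000.0, 8001)

def toy_spectrum(rest_wave):
    """Hypothetical rest-frame spectrum: flat continuum that drops
    below 4000 A, a crude stand-in for the 4000 A break."""
    return np.where(rest_wave < 4000.0, 0.3, 1.0)

def observed_flux(z):
    # Redshift stretches wavelengths: lambda_obs = (1 + z) * lambda_rest.
    # Overall dimming is ignored here; only the shape across bands matters.
    return toy_spectrum(wave / (1.0 + z))

# Hypothetical top-hat filters (lower, upper edge in A), placed roughly like g, r, i, z.
BANDS = {"g": (4000, 5500), "r": (5500, 7000), "i": (7000, 8500), "z": (8500, 10000)}

def band_magnitudes(flux):
    """Magnitude per band from the flux averaged over the band (arbitrary zero point)."""
    mags = {}
    for name, (lo, hi) in BANDS.items():
        inside = (wave >= lo) & (wave < hi)
        mags[name] = -2.5 * np.log10(flux[inside].mean())
    return mags

for z in (0.0, 0.5, 1.0):
    m = band_magnitudes(observed_flux(z))
    print(z, {k: round(float(v), 2) for k, v in m.items()})
```

At $z = 0$ all four toy bands share the same magnitude; by $z = 1$ the break has moved into the $i$ band, so $g$ and $r$ are fainter while $z$ is unchanged.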

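Photometric redshift (photo-$z$) methods fall into two classes. Template-fitting methods compare the observed magnitudes with those predicted from spectral templates shifted to a range of trial redshifts. Machine-learning (ML) methods treat photo-$z$ as a regression problem and learn the mapping from magnitudes to redshift from a training sample of galaxies with known redshifts.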
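Template fitting is often implemented as a $\chi^2$ search over a grid of trial redshifts. The sketch below is a simplified illustration under stated assumptions: a single hypothetical step-function template, top-hat filters, Gaussian magnitude errors, and the template's unknown brightness fitted as a free magnitude offset. Real template codes use libraries of templates, realistic filter curves and often priors.

```python
import numpy as np

wave = np.linspace(3000.0, 11000.0, 8001)  # observed-frame grid in Angstroms
# Hypothetical top-hat filters (lower, upper edge in A).
BANDS = [(4000, 5500), (5500, 7000), (7000, 8500), (8500, 10000)]

def template(rest_wave):
    # Hypothetical single template: continuum with a step at 4000 A.
    return np.where(rest_wave < 4000.0, 0.3, 1.0)

def model_mags(z):
    """Band magnitudes of the template redshifted to z (arbitrary zero point)."""
    flux = template(wave / (1.0 + z))
    return np.array([-2.5 * np.log10(flux[(wave >= lo) & (wave < hi)].mean())
                     for lo, hi in BANDS])

def fit_redshift(obs_mags, obs_errs, z_grid):
    """Grid search: at each trial z, fit the free magnitude offset
    (the unknown overall brightness) analytically, then compute chi^2."""
    w = 1.0 / obs_errs**2
    chi2 = []
    for z in z_grid:
        m = model_mags(z)
        offset = np.sum(w * (obs_mags - m)) / np.sum(w)  # best-fit normalization
        chi2.append(np.sum(w * (obs_mags - m - offset) ** 2))
    chi2 = np.array(chi2)
    return z_grid[np.argmin(chi2)], chi2

z_grid = np.round(np.linspace(0.0, 1.5, 151), 2)
obs = model_mags(0.7) + 22.0        # noiseless mock galaxy at z = 0.7
errs = np.full(len(BANDS), 0.05)    # assumed 0.05 mag errors in every band
z_best, chi2 = fit_redshift(obs, errs, z_grid)
print(z_best)
```

With a noiseless mock observation the $\chi^2$ minimum falls at the input redshift; noisy data and template mismatch are what make real template fits uncertain.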

How realistic are machine-learning photo-$z$ estimates?

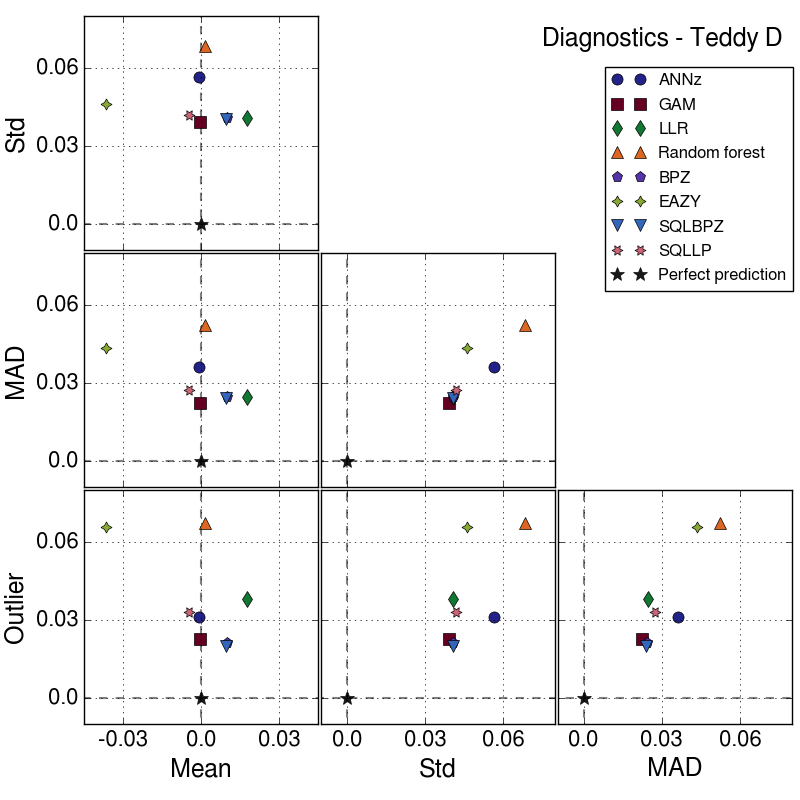

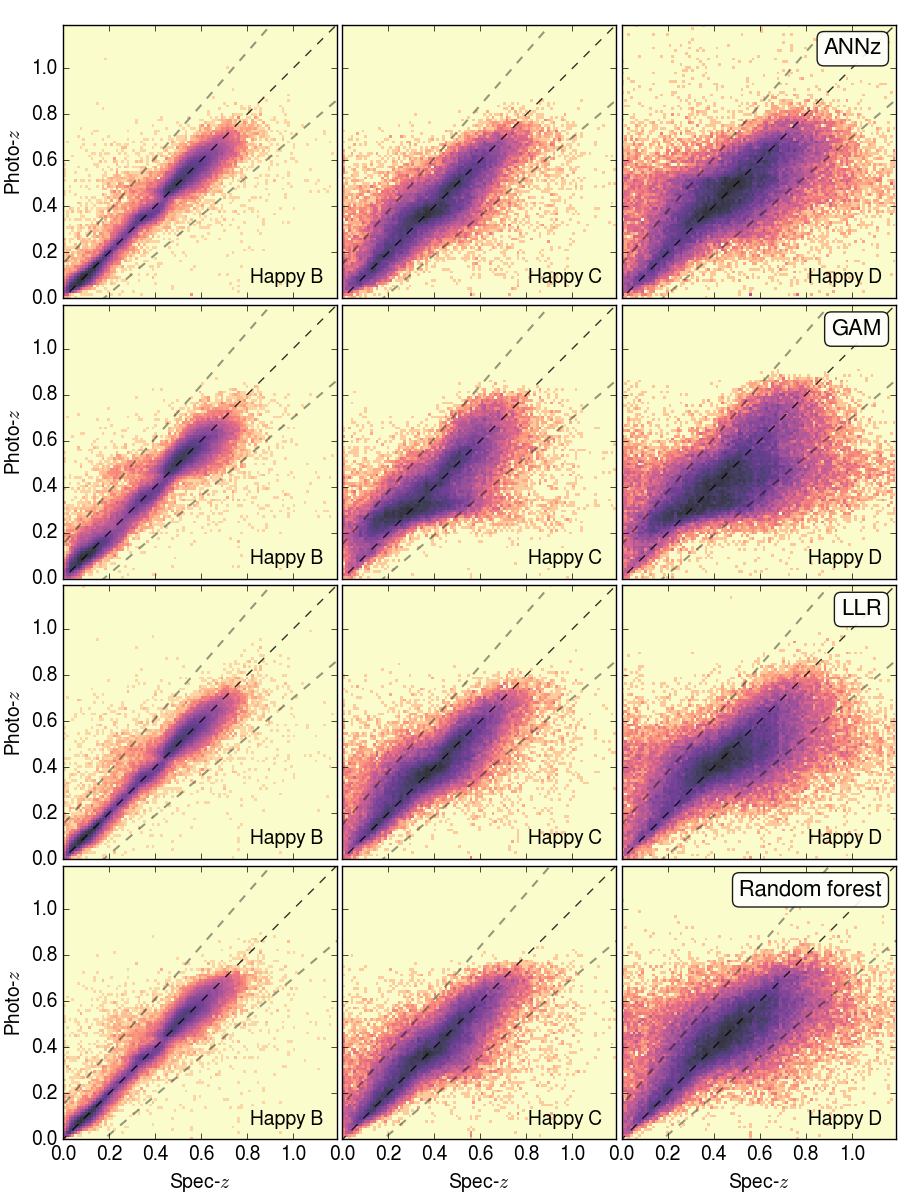

Recent ML photo-$z$ studies have claimed very high precision. However, ML predictions are biased when the feature distributions of the training and test sets differ. This is exactly the situation for photo-$z$: the training set consists of spectroscopically confirmed galaxies, which are typically brighter, while the method is applied to faint galaxies in general.
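A toy simulation can illustrate this bias. It assumes a hypothetical population in which one colour tracks redshift and more distant galaxies look fainter, and it uses a plain $k$-nearest-neighbour regressor as a stand-in for an ML method; none of this is the study's actual setup. Only bright galaxies (magnitude below 22) enter the "spectroscopic" training set. The mean error is then expected to stay near zero on held-out bright galaxies but be pulled low on faint ones, whose redshifts lie largely beyond the training coverage.

```python
import numpy as np

rng = np.random.default_rng(42)

# Hypothetical toy population: redshift drives both colour and brightness.
n = 6000
z = rng.uniform(0.0, 2.0, n)
colour = z + rng.normal(0.0, 0.1, n)            # colour tracks redshift
mag = 20.0 + 2.0 * z + rng.normal(0.0, 0.5, n)  # distant galaxies look fainter

def knn_predict(x_train, y_train, x_test, k=10):
    """Plain k-nearest-neighbour regression on a 1-D feature."""
    dist = np.abs(x_test[:, None] - x_train[None, :])
    idx = np.argpartition(dist, k, axis=1)[:, :k]  # indices of the k closest
    return y_train[idx].mean(axis=1)

# "Spectroscopic" training set: only bright galaxies (mag < 22) get spectra.
bright = mag < 22.0
train = bright & (rng.uniform(size=n) < 0.5)  # half of the bright sample
bright_test = bright & ~train                 # same distribution as training
faint_test = ~bright                          # shifted feature distribution

def mean_error(test_mask):
    pred = knn_predict(colour[train], z[train], colour[test_mask])
    return np.mean(pred - z[test_mask])

bright_bias = mean_error(bright_test)
faint_bias = mean_error(faint_test)
print(f"bright: {bright_bias:+.3f}  faint: {faint_bias:+.3f}")
```

The same regressor looks accurate when validated on data resembling its training set, which is why validation on spectroscopic samples alone can understate the error on the faint population.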

In our study, we showed that the real uncertainty of ML photo-$z$ is much larger than previously believed. We quantified this gap and suggested that the estimates could be greatly improved if surveys measured more spectra of faint galaxies.
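Photo-$z$ accuracy is commonly summarized on the normalized residual $\Delta z = (z_{\rm phot} - z_{\rm spec})/(1+z_{\rm spec})$ by a bias, the normalized median absolute deviation $\sigma_{\rm NMAD}$, and an outlier fraction. The source does not say which metrics the study used; this is a minimal sketch of these widely used definitions, with the conventional outlier cut $|\Delta z| > 0.15$ assumed.

```python
import numpy as np

def photoz_metrics(z_phot, z_spec, outlier_cut=0.15):
    """Common photo-z quality metrics on dz = (z_phot - z_spec) / (1 + z_spec)."""
    z_phot = np.asarray(z_phot, dtype=float)
    z_spec = np.asarray(z_spec, dtype=float)
    dz = (z_phot - z_spec) / (1.0 + z_spec)
    med = np.median(dz)
    # 1.4826 rescales the median absolute deviation to a Gaussian sigma.
    sigma_nmad = 1.4826 * np.median(np.abs(dz - med))
    outlier_frac = np.mean(np.abs(dz) > outlier_cut)
    return {"bias": med, "sigma_nmad": sigma_nmad, "outlier_frac": outlier_frac}

m = photoz_metrics([0.53, 0.98, 1.55, 2.9], [0.5, 1.0, 1.5, 2.0])
print(m)
```

Computing these metrics separately on a spectroscopic-like test set and on a sample representative of faint galaxies is one way to expose the gap between claimed and realistic precision.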

We also found that magnitude measurement errors affect photo-$z$ estimates and cannot be corrected by reweighting. ML methods should therefore include magnitude measurement uncertainty in their error budget.
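One common way to fold magnitude errors into an ML photo-$z$ error budget is Monte Carlo resampling of the inputs. This is an illustrative sketch, not the study's procedure: `predict` stands for any already-trained regressor (the `toy_predict` function is hypothetical), band errors are assumed independent and Gaussian, and the resulting spread is combined in quadrature with an assumed model scatter.

```python
import numpy as np

def mc_photoz_uncertainty(predict, mags, mag_errs, n_draws=200, seed=0):
    """Perturb each band by Gaussian noise of its quoted error, re-run the
    trained predictor on every draw, and return the mean and spread."""
    rng = np.random.default_rng(seed)
    mags = np.asarray(mags, dtype=float)
    mag_errs = np.asarray(mag_errs, dtype=float)
    draws = mags + rng.normal(size=(n_draws, mags.size)) * mag_errs
    z_draws = np.array([predict(d) for d in draws])
    return z_draws.mean(), z_draws.std()

def combined_sigma(sigma_model, sigma_mag):
    # Add in quadrature, assuming the two error sources are independent.
    return np.hypot(sigma_model, sigma_mag)

# Hypothetical stand-in for a trained regressor: linear in one colour.
def toy_predict(m):
    return 0.5 * (m[0] - m[1])

z_mean, z_sigma_mag = mc_photoz_uncertainty(toy_predict, [22.0, 21.5], [0.1, 0.1])
sigma_total = combined_sigma(0.03, z_sigma_mag)  # 0.03: assumed model scatter
print(z_mean, z_sigma_mag, sigma_total)
```

Because faint galaxies have larger magnitude errors, the Monte Carlo term grows for exactly the population where training data are scarce.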